Data Warehousing in Microsoft Fabric: The Complete 2026 Production Guide

What is Data Warehousing in Fabric?

Data Warehousing in Fabric is an enterprise-grade, SaaS analytics platform that decouples compute from storage using a serverless SQL engine and OneLake. Unlike traditional warehouses, it stores data in the open Delta Parquet format, allowing seamless interoperability between T-SQL, Spark, and Power BI Direct Lake mode without data movement. It is designed to unify data engineering and business intelligence into a single, governed layer.

Context Why Data Warehousing in Fabric matters for modern analytics

In Microsoft Fabric, the Data Warehouse is an enterprise‑scale relational warehouse built on a data lake foundation. It stores data in open Delta tables rather than proprietary storage, so data engineers and business users can share the same data without compromising governance or security.

Fabric Data Warehouse is designed for curated star or snowflake schemas, governed corporate data marts, and semantic models for BI, providing full multi‑table ACID transactions, T‑SQL programmability, and tight Power BI integration so that analytics teams can move from ingestion to trusted insights in one SaaS experience.

Stack Core architecture: Warehouse, Lakehouse & SQL analytics endpoint

Fabric supports two main warehousing items: the Warehouse item and the SQL analytics endpoint of the Lakehouse. Both run on the same distributed SQL engine and Delta Lake storage, but target slightly different personas and patterns.

Warehouse item (Fabric Data Warehouse)

Enterprise‑grade relational warehouse with full DDL/DML support (T‑SQL), materialized views, stored procedures, and multi‑table ACID transactions, ideal for curated fact/dimension models and governed BI data marts.

SQL analytics endpoint of the Lakehouse

Auto‑generated SQL endpoint over Lakehouse Delta tables that allows creating views, inline TVFs, and stored procedures, plus applying SQL permissions, without directly modifying underlying lake data.

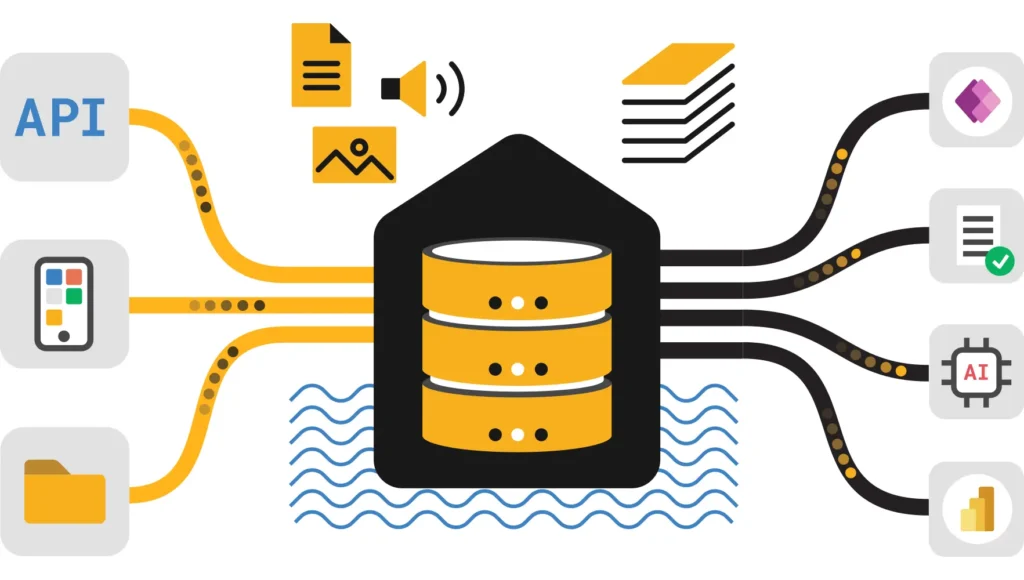

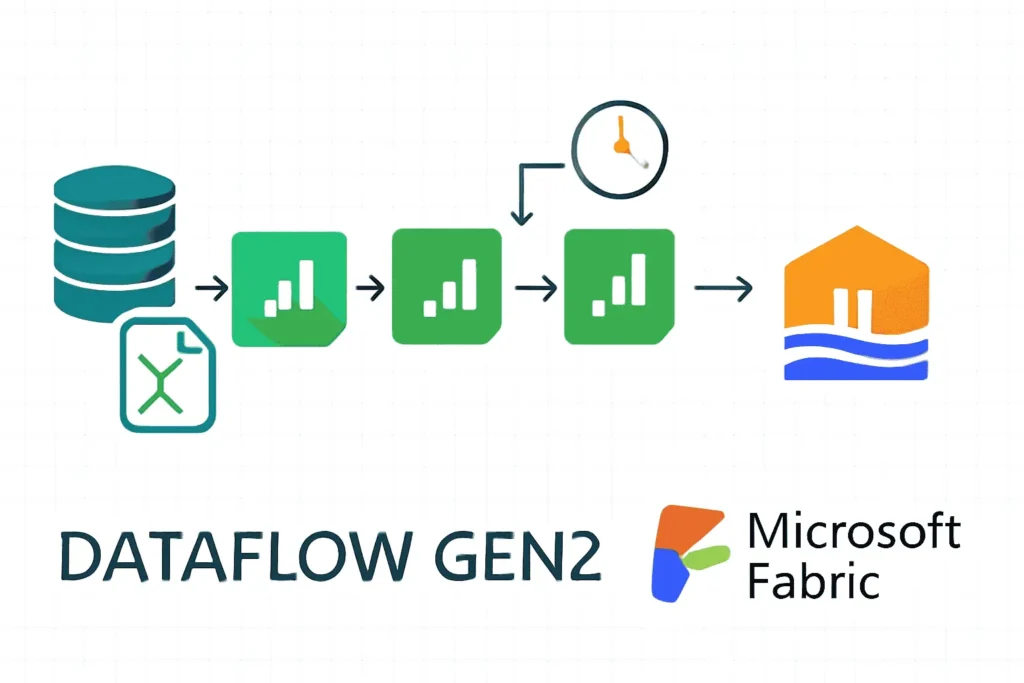

All warehouse data is stored as Delta tables in OneLake Files, and you can ingest data via COPY INTO, Pipelines, Dataflows, Spark, or cross‑database CTAS/INSERT…SELECT, giving you multiple ingestion paths without locking into a single engine.

Warehouse vs Lakehouse: when to choose what

Choose Data Warehouse when…

- You want a managed, enterprise‑scale SQL surface with “no‑knobs” performance and minimal setup.

- Workloads are largely structured/semi‑structured with defined star or snowflake schemas.

- Teams prefer T‑SQL (DBAs, BI developers) and need strong transactional guarantees.

Choose Lakehouse when…

- You need a large repository of mixed or unstructured data and Spark as the primary transformation tool.

- You want to experiment freely, then expose curated layers through the SQL analytics endpoint.

- You will later add a Warehouse for specific governed marts (coexistence is supported).

For a deeper comparison of Lakehouse vs Data Warehouse in Fabric, including performance and cost trade‑offs, see Fabric Lakehouse vs Data Warehouse: Best Practices.

Plan Designing a Fabric Data Warehouse: layers, schema, and modeling patterns

Design your Data Warehouse around clear layers in OneLake and Warehouse to enable incremental processing, governed semantics, and high‑quality BI models while preserving raw lineage for auditability.

- Raw / Landing layer — preserve original files immutably (e.g., CSV, JSON, Parquet) in Lakehouse or mirrored sources for compliance and replay.

- Bronze / Staging — lightly clean, standardize schema, and enforce basic types to feed downstream transformations.

- Silver / Refined — normalize, dedupe, and apply business rules, often in Lakehouse or notebook‑driven Spark jobs.

- Gold / Warehouse — denormalized Warehouse tables (facts/dimensions) tuned for T‑SQL queries, materialized views, and semantic models.

Modeling guidance for Data Warehousing in Fabric

Prefer star schemas with narrow fact tables and conformed dimensions to simplify DAX and reduce query complexity for Power BI, and partition facts by date or business period to align with common filters and optimize scan ranges.

When ingesting from multiple systems, use surrogate keys in dimensions, maintain business keys for lineage, and adopt a consistent naming convention (for example dw_ prefix for Warehouse facts and dimensions). This improves discoverability in the Fabric item catalog and Power BI semantic models.
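
As a rough illustration of the surrogate-key advice, the Python sketch below assigns a warehouse-owned integer key to each distinct (source system, business key) pair and keeps the business key on the row for lineage. The function name, the field names, and the `crm`/`erp` source systems are hypothetical and not part of Fabric.

```python
from itertools import count


def assign_surrogate_keys(rows, key_map=None):
    """Attach a stable integer surrogate key to each dimension row.

    rows: iterable of dicts with 'source_system' and 'business_key' (hypothetical names).
    key_map: existing {(source_system, business_key): surrogate_key} mapping, reused
             across loads so that known members keep their keys.
    """
    key_map = {} if key_map is None else key_map
    next_key = count(max(key_map.values(), default=0) + 1)
    keyed = []
    for row in rows:
        natural = (row["source_system"], row["business_key"])
        if natural not in key_map:
            key_map[natural] = next(next_key)
        # The business key stays on the row; the surrogate key is added next to it.
        keyed.append({**row, "customer_sk": key_map[natural]})
    return keyed, key_map


rows = [
    {"source_system": "crm", "business_key": "C-100", "name": "Ada"},
    {"source_system": "erp", "business_key": "C-100", "name": "Ada L."},
    {"source_system": "crm", "business_key": "C-100", "name": "Ada"},
]
dim_rows, key_map = assign_surrogate_keys(rows)
```

Passing the returned `key_map` into the next load keeps existing members on their original keys. Matching the same real-world customer across source systems (conforming the dimension) is a separate step that this sketch does not attempt.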

Build Implementation patterns with T‑SQL, Delta Lake & Spark

Fabric Data Warehouse is primarily developed using T‑SQL on top of Delta tables, but you can also bulk load and transform data from Spark notebooks and pipelines, and then expose curated objects through Warehouse or the SQL analytics endpoint.

Use notebooks and Lakehouse for heavy ELT, complex joins, and data science, then materialize final Warehouse tables with CTAS or MERGE patterns for governed serving layers.

Create a curated fact table using CTAS
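
A minimal sketch of the CTAS pattern, written as a small Python helper that renders the T-SQL so it can be parameterized from a notebook or pipeline. The table and column names (`dbo.dw_fact_sales`, `staging.sales`, `dw_dim_customer`, and so on) are illustrative assumptions, not objects defined in this guide.

```python
import re

# Letters, digits, and underscores only; anything else is rejected rather than escaped.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def quote_name(name: str) -> str:
    """Bracket-quote a one- or two-part T-SQL name after validating each part."""
    parts = name.split(".")
    for part in parts:
        if not _IDENTIFIER.fullmatch(part):
            raise ValueError(f"unsafe identifier: {part!r}")
    return ".".join(f"[{part}]" for part in parts)


def render_ctas(target: str, select_sql: str) -> str:
    """Render CREATE TABLE ... AS SELECT for a curated table."""
    return f"CREATE TABLE {quote_name(target)}\nAS\n{select_sql.strip()};"


# Hypothetical staging and dimension tables, used only for illustration.
select_sql = """
SELECT s.order_id, s.order_date, d.customer_sk, s.quantity * s.unit_price AS sales_amount
FROM [staging].[sales] AS s
JOIN [dbo].[dw_dim_customer] AS d ON d.customer_bk = s.customer_id
"""
ctas_statement = render_ctas("dbo.dw_fact_sales", select_sql)
print(ctas_statement)
```

The rendered statement can be pasted into the Warehouse query editor or executed from a pipeline activity. Validating the target name before interpolation keeps a parameterized table name from injecting extra SQL.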

Delta MERGE pattern for idempotent incremental loads
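
The idempotency property of a MERGE-style load can be shown with a small, engine-free Python simulation: rows are matched on a business key, matched rows are updated, and unmatched rows are inserted. This is a sketch of the semantics only, with hypothetical column names. It is not the Delta or T-SQL MERGE implementation, and whether MERGE is available in a given Warehouse or Spark runtime should be checked against current documentation.

```python
def merge_upsert(target, source_rows, key="order_id"):
    """Upsert source_rows into target (a dict keyed by the business key).

    Matched keys are overwritten and unmatched keys are inserted, so replaying
    the same batch leaves the target unchanged.
    """
    merged = dict(target)  # leave the caller's table untouched
    for row in source_rows:
        merged[row[key]] = dict(row)
    return merged


target = {1: {"order_id": 1, "status": "open"}}
batch = [
    {"order_id": 1, "status": "shipped"},  # matched   -> update
    {"order_id": 2, "status": "open"},     # unmatched -> insert
]
once = merge_upsert(target, batch)
twice = merge_upsert(once, batch)  # same batch replayed, for example after a retry
```

Because the result depends only on the key and the latest row values, a pipeline retry that reprocesses the same batch does not create duplicates, which is what makes the load safe to rerun.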

Hybrid pattern: Spark notebooks + Warehouse

For detailed Spark transformation patterns, see Transform Data Using Notebooks in Fabric.

Tune Performance tuning & cost optimization for Data Warehousing in Fabric

Fabric’s distributed query engine and autonomous workload management provide “no‑knobs” performance, but physical design and workload patterns still matter for cost and latency.

Combine good physical modeling (distribution, partitioning, clustering) with Warehouse sizing, result caching, and background maintenance (OPTIMIZE) to keep queries fast and predictable.

Key tuning techniques

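- Distribution & partition strategy — the Warehouse engine manages data distribution automatically, so explicit distribution keys are not declared as they are in Synapse. Focus on what you control: partition Lakehouse Delta tables by date and organize large facts around the columns used in common filters and joins (such as customer or order date) to limit scans and improve parallelism.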

- OPTIMIZE & Z‑ORDER — periodically compact small Delta files and Z‑ORDER on common filter columns (such as customer_id or date) to reduce I/O and speed up selective queries.

- Materialized views for hot queries — use materialized views for expensive aggregations that power dashboards with high concurrency.

- Monitor top queries — regularly review query history and capacity metrics to find long‑running workloads and refactor them.

- Use Direct Lake for BI where possible — combine Warehouse with Direct Lake models in Power BI to avoid duplicated storage and improve freshness for large semantic models.
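
To make the "monitor top queries" step concrete, here is a hedged Python sketch that ranks queries by total elapsed time from an exported query history. The record fields (`query_text`, `elapsed_ms`) are assumptions about how such an export might be shaped, not a documented Fabric schema.

```python
from collections import defaultdict


def top_queries_by_total_time(history, limit=3):
    """Rank query texts by total elapsed milliseconds across all executions."""
    totals = defaultdict(lambda: {"runs": 0, "total_ms": 0})
    for record in history:
        entry = totals[record["query_text"]]
        entry["runs"] += 1
        entry["total_ms"] += record["elapsed_ms"]
    ranked = sorted(totals.items(), key=lambda item: item[1]["total_ms"], reverse=True)
    return [(text, stats["runs"], stats["total_ms"]) for text, stats in ranked[:limit]]


# Hypothetical export of query history rows.
history = [
    {"query_text": "SELECT SUM(sales_amount) FROM dw_fact_sales", "elapsed_ms": 4200},
    {"query_text": "SELECT COUNT(*) FROM dw_dim_customer", "elapsed_ms": 150},
    {"query_text": "SELECT SUM(sales_amount) FROM dw_fact_sales", "elapsed_ms": 3900},
]
top = top_queries_by_total_time(history, limit=1)
```

Ranking by total time rather than by single-run duration surfaces moderately slow queries that run very often, which are frequently the better refactoring targets.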

For deeper performance tuning beyond Warehouse (including Spark shuffle optimization and capacity planning), see Fabric Data Warehouse Optimization and Fabric Capacity Optimization.

Run Operationalizing Data Warehousing in Fabric: pipelines, monitoring & CI/CD

Operational excellence means reliable schedules, robust testing, and rich observability across pipelines, notebooks, and Warehouse so that SLAs can be met consistently.

Use Data Pipelines to orchestrate Warehouse loads, Spark notebooks, and Dataflows, and combine them with alerting and deployment workflows to automate the full lifecycle.

Recommended operational practices

- Pipeline‑driven ELT — orchestrate copy activities, notebooks, and Warehouse stored procedures with retry and alerting policies defined per pipeline.

- Parameterization — pass run_date, environment, and source system IDs into activities so the same pipeline/templates work across dev, test, and prod.

- Testing in PRs — run integration tests on sample datasets (row counts, constraints, DAX checks) before merging Warehouse changes to main branches.

- Deployment gating — require approvals and automated quality signals before deploying migrations or schema changes.
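
The parameterization practice above can be sketched in Python as a small validated parameter object that is flattened before being handed to pipeline activities. The class name, the allowed environment list, and the `pos` source system ID are illustrative assumptions.

```python
from dataclasses import asdict, dataclass
from datetime import date

ALLOWED_ENVIRONMENTS = ("dev", "test", "prod")


@dataclass(frozen=True)
class RunParameters:
    run_date: date
    environment: str
    source_system_id: str

    def __post_init__(self):
        # Fail fast on typos so a run never targets an unintended workspace.
        if self.environment not in ALLOWED_ENVIRONMENTS:
            raise ValueError(f"unknown environment: {self.environment!r}")

    def as_activity_parameters(self):
        """Flatten to plain string values suitable for passing between activities."""
        values = asdict(self)
        values["run_date"] = self.run_date.isoformat()
        return values


params = RunParameters(date(2026, 1, 15), "test", "pos").as_activity_parameters()
```

Keeping the same three parameters in every environment means the pipeline definition itself does not change between dev, test, and prod; only the values do.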

For step‑by‑step orchestration examples, see Data Pipelines in Fabric.

Trust Security, governance & observability for Data Warehousing in Fabric

Because Fabric centralizes Warehouse, Lakehouse, and BI on OneLake, strong identity, access control, lineage, and monitoring are essential to keep data secure and compliant.

Use Microsoft Entra ID for authentication, workspace and item permissions for coarse‑grained control, and row/column policies at the SQL layer for fine‑grained protection.

Key governance controls

- Workspace RBAC — assign Admin/Member/Contributor/Viewer roles to control who can create or modify Warehouse, pipelines, and notebooks.

- Object‑level security — manage permissions on Warehouse tables, views, and procedures via T‑SQL GRANT/REVOKE, and use row‑level filters when needed.

- Column masking — mask or hash PII columns, or expose only masked views to downstream consumers.

- Lineage & catalog — register and document Warehouse objects so impact analysis is possible when making changes.
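
For the column-masking control, one possible approach is to pseudonymize or partially mask PII before it reaches a consumer-facing table or view. The Python sketch below uses only the standard library. The secret value and function names are hypothetical, and this is an illustration of the idea rather than Fabric's built-in masking feature.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret: bytes) -> str:
    """Return a keyed SHA-256 digest: equal inputs still join, raw values stay hidden."""
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep the first character and the domain, hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not local or not domain:
        return "***"  # not a recognizable address, so hide it completely
    return f"{local[0]}***@{domain}"


secret = b"rotate-me"  # hypothetical key; in practice load it from a secret store
masked = mask_email("ada.lovelace@example.com")
token = pseudonymize("ada.lovelace@example.com", secret)
```

A keyed hash is used instead of a plain hash so that someone who can guess likely inputs cannot rebuild the mapping without the secret.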

For a dedicated governance deep‑dive, including Purview integration and security patterns, see Microsoft Fabric Data Governance Tutorial.

Apply Use cases & migration recipes for Data Warehousing in Fabric

Enterprises use Data Warehousing in Fabric to modernize BI platforms, consolidate legacy warehouses, and power AI‑ready analytics with Direct Lake, Copilot, and RAG solutions.

Modern BI warehouse for retail

Ingest POS, e‑commerce, and inventory feeds into Lakehouse raw zones, refine them with notebooks, and publish curated sales, customer, and product facts into Warehouse. Then build Direct Lake or Warehouse‑connected Power BI models so business teams get fresh, governed metrics with predictable performance.

Lift‑and‑shift from Synapse or SQL Server

- Inventory schemas and workloads in the source warehouse, prioritizing star/snowflake models and critical reports.

- Use the Fabric Migration Assistant for Data Warehouse to move schema and data from dedicated SQL pools, SQL Server, or other SQL engines.

- Land historical data into OneLake Delta tables, then stitch them into Warehouse objects, validating row counts, keys, and DAX calculations.

- Cut over Power BI models and semantic layers to the Fabric Warehouse or Direct Lake once validation passes.
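
The row-count validation mentioned in the steps above could look like the following Python sketch, which compares per-table counts collected from the source warehouse and from Fabric. The table names and counts are made-up sample values, and how the counts are collected is left out.

```python
def compare_row_counts(source_counts, target_counts):
    """Return {table: (source, target)} for every table that differs or is missing."""
    mismatches = {}
    for table in sorted(set(source_counts) | set(target_counts)):
        src = source_counts.get(table)
        tgt = target_counts.get(table)
        if src != tgt:
            mismatches[table] = (src, tgt)
    return mismatches


# Hypothetical counts gathered with SELECT COUNT(*) on each side.
source_counts = {"dw_fact_sales": 1_000_000, "dw_dim_customer": 52_310, "dw_dim_product": 4_800}
target_counts = {"dw_fact_sales": 1_000_000, "dw_dim_customer": 52_309}
mismatches = compare_row_counts(source_counts, target_counts)
```

An empty result is a reasonable gate for cut-over. A `None` on either side flags a table that was never migrated or that exists only in the target.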

Debug Troubleshooting & Error Codes

Even with a robust design, production issues happen. Below are common error codes you might encounter in Fabric Data Warehouse and how to resolve them.

| Error / Symptom | Potential Cause | Resolution |
|---|---|---|
| Error 24801: Query Cancelled | Capacity throttling due to burst usage exceeding limits. | Check Fabric Metrics App. Optimize T-SQL queries (avoid SELECT *). Smooth workloads over 24 hours or upgrade F-SKU. |
| Transaction Log Full | Delta log limit reached during massive DML operation. | Batch your DELETE or UPDATE operations into smaller chunks rather than running a single transaction on billions of rows. |
| Direct Lake Fallback | Views containing unsupported T-SQL logic or high complexity. | Materialize views into physical tables. Avoid non-deterministic functions in views used by Direct Lake. |
| 110802: OpenRowset Path | Incorrect path or permissions to OneLake file. | Verify the ABFSS path format and ensure the identity has ‘Read’ permissions on the Lakehouse item. |
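
The batching advice for large DELETE or UPDATE operations can be sketched as follows: split the affected date range into fixed windows and issue one statement per window, so that no single transaction touches the whole table. The table and column names are hypothetical, and the 30-day window is an arbitrary starting point to tune.

```python
from datetime import date, timedelta


def date_windows(start: date, end: date, days_per_batch: int):
    """Yield half-open [window_start, window_end) ranges covering [start, end)."""
    if days_per_batch < 1:
        raise ValueError("days_per_batch must be at least 1")
    window_start = start
    while window_start < end:
        window_end = min(window_start + timedelta(days=days_per_batch), end)
        yield window_start, window_end
        window_start = window_end


def render_batched_deletes(table, date_column, start, end, days_per_batch=30):
    """Render one DELETE per window; each is meant to run as its own transaction."""
    return [
        f"DELETE FROM {table} WHERE {date_column} >= '{lo.isoformat()}' "
        f"AND {date_column} < '{hi.isoformat()}';"
        for lo, hi in date_windows(start, end, days_per_batch)
    ]


statements = render_batched_deletes(
    "dbo.dw_fact_sales", "order_date", date(2024, 1, 1), date(2024, 3, 1)
)
```

Half-open windows avoid deleting a boundary date twice or skipping it, and each statement can be retried on its own if a batch fails.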

Migrate Detailed Migration Guide: Synapse to Fabric

Migrating from Azure Synapse Dedicated SQL Pools to Fabric requires understanding the architectural differences. While T-SQL is 95% compatible, the storage layer is different.

Step 1: Schema Adaptation

In Synapse, you explicitly define `DISTRIBUTION` (Hash, Round_Robin). In Fabric, remove these keywords. Fabric manages distribution automatically based on data volume and query patterns. Remove `CLUSTERED COLUMNSTORE INDEX` definitions; Fabric uses Parquet compression by default.
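
One way to automate this clean-up across many scripts is a small Python pass that drops the trailing `WITH (...)` table-options clause from each `CREATE TABLE` statement. This sketch assumes the clause holds only distribution and index options, as in the sample DDL below (a made-up table). Statements that use `WITH` for anything else should be reviewed by hand.

```python
import re


def strip_synapse_table_options(ddl: str) -> str:
    """Remove the WITH (...) clause that follows the column list of a CREATE TABLE."""
    match = re.search(r"\)\s*WITH\s*\(", ddl, flags=re.IGNORECASE)
    if match is None:
        return ddl  # nothing to strip
    depth = 0
    open_paren = match.end() - 1  # the "(" that opens the WITH clause
    for i in range(open_paren, len(ddl)):
        if ddl[i] == "(":
            depth += 1
        elif ddl[i] == ")":
            depth -= 1
            if depth == 0:
                # Keep the ")" that closes the column list, drop the WITH clause.
                return ddl[: match.start() + 1] + ddl[i + 1 :]
    return ddl  # unbalanced parentheses: leave the statement untouched


synapse_ddl = (
    "CREATE TABLE dbo.FactSales (SaleId INT, Amount DECIMAL(10, 2)) "
    "WITH (DISTRIBUTION = HASH(SaleId), CLUSTERED COLUMNSTORE INDEX);"
)
fabric_ddl = strip_synapse_table_options(synapse_ddl)
```

The parenthesis counter handles nested options such as `HASH(SaleId)`, which a simple non-greedy regular expression would cut short.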

Step 2: Data Movement Strategy

Do not use INSERT INTO … SELECT for migrating terabytes of data across linked servers. Instead:

- Use CETAS in Synapse to export data to ADLS Gen2 as Parquet.

- Create a Shortcut in Fabric OneLake to that ADLS folder.

- Use COPY INTO in Fabric Warehouse to ingest the data at high speed.
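
As a hedged sketch of the final step, the Python helper below renders a basic `COPY INTO` statement for Parquet files. The storage account name and folder path are placeholders. Authentication options are deliberately omitted, because they depend on how access to the storage account is granted.

```python
def render_copy_into(target_table: str, source_url: str) -> str:
    """Render a COPY INTO statement that loads Parquet files into a Warehouse table."""
    if "'" in source_url:
        raise ValueError("source_url must not contain single quotes")
    return (
        f"COPY INTO {target_table}\n"
        f"FROM '{source_url}'\n"
        "WITH (FILE_TYPE = 'PARQUET');"
    )


# "examplestorage" and the folder layout are hypothetical placeholders.
statement = render_copy_into(
    "dbo.dw_fact_sales",
    "https://examplestorage.dfs.core.windows.net/export/fact_sales/*.parquet",
)
print(statement)
```

Rendering one statement per exported table keeps the load scriptable, so the same helper can drive a loop over the schema inventory from the migration plan.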

Step 3: Stored Procedure Refactoring

Review stored procedures for legacy syntax like `CTAS` with distribution options. Convert them to standard `CREATE TABLE` or Fabric-compatible `CTAS`. Remove any logic related to manual index maintenance, as Fabric’s auto-optimization handles this.

FAQ Frequently asked questions about Data Warehousing in Fabric

What role does Delta Lake play in Data Warehousing in Fabric?

Fabric Warehouse data is stored in Delta tables (Parquet with a transaction log), providing ACID transactions, time‑travel, and efficient upserts while remaining open and shareable with Spark and other engines.

When should I use notebooks vs Warehouse SQL for transformations?

Use notebooks (Spark) for heavy, distributed transformations, data science, and complex pipelines; use Warehouse SQL for serving curated models, doing incremental loading, and powering BI queries that need low latency and strong governance.

How can I make schema changes safely in a Fabric Data Warehouse?

Apply schema evolution in stages: test changes in non‑production workspaces, maintain migration scripts in source control, run regression tests on representative data, and use PR approvals before applying changes to production Warehouse.

How do I control costs for Data Warehousing in Fabric?

Right‑size capacity, avoid unnecessary full reloads (use incremental MERGE), optimize storage layout, schedule maintenance jobs off‑peak, and monitor capacity metrics to detect inefficient queries or data models.

How does Data Warehousing in Fabric support AI and advanced analytics?

Warehouse and Lakehouse share OneLake and Delta, so you can use Warehouse for curated BI and Lakehouse for AI patterns like RAG or Copilot‑assisted modeling, while keeping a single source of truth for your enterprise data.

Links References & further reading for Data Warehousing in Fabric

- Data Warehousing in Fabric (UltimateInfoGuide)

- Fabric Lakehouse Tutorial

- Transform Data Using Notebooks

- Data Pipelines in Fabric

- Microsoft Fabric Data Warehouse Optimization

- Microsoft Learn: What is Data Warehousing in Microsoft Fabric?