Transform Data Using Notebooks in Microsoft Fabric

Complete 2026 guide to transforming data using notebooks. Master production patterns, Delta Lake merge operations, performance optimization, and real-world recipes with official Microsoft best practices.

What does it mean to Transform Data Using Notebooks?

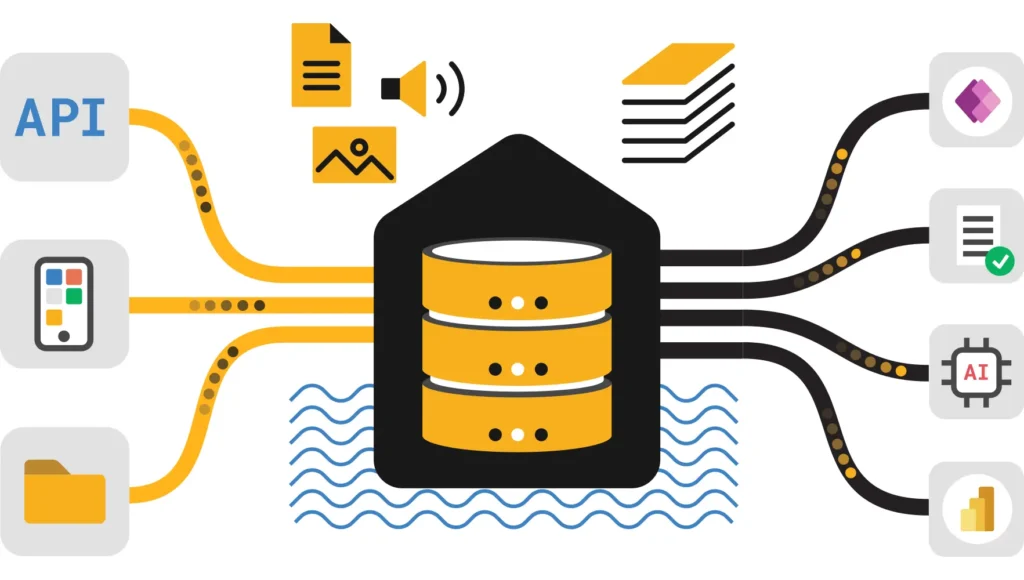

To transform data using notebooks in Microsoft Fabric means utilizing an interactive coding environment (supporting PySpark, Spark SQL, and Scala) to clean, aggregate, and restructure data stored in OneLake. Unlike visual dataflows, notebooks offer full programmatic control, enabling complex logic, machine learning integration, and high-performance processing of large-scale datasets (TB+) using distributed Apache Spark clusters.

Why Transform Data Using Notebooks in Fabric

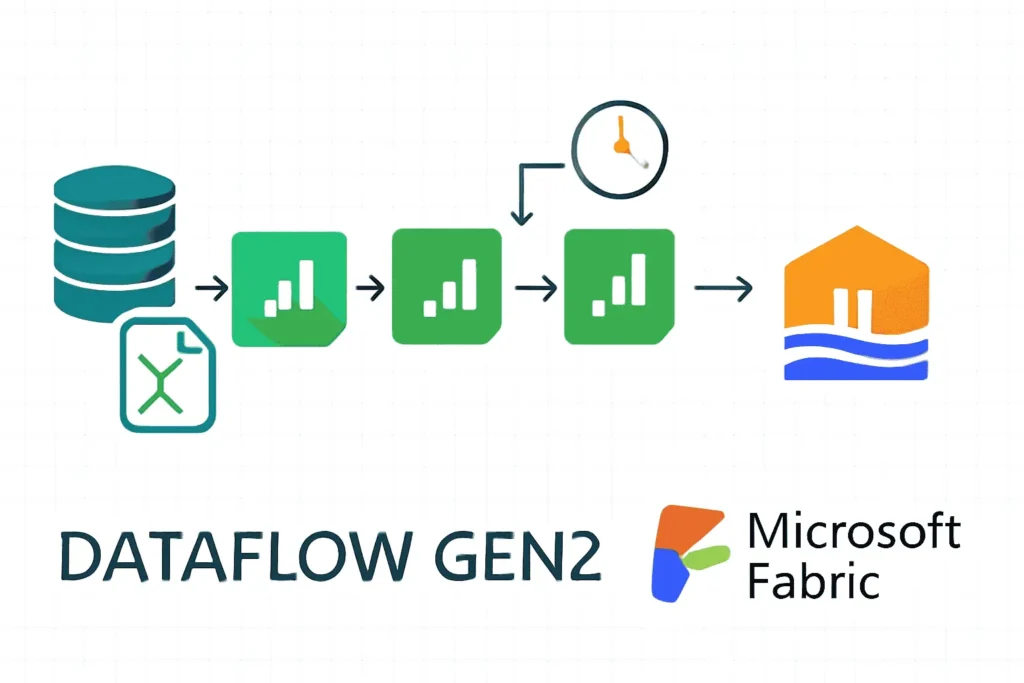

Transforming data using notebooks is the gold standard for data engineering in Microsoft Fabric. Unlike Dataflow Gen2 (visual, low-code) or SQL Warehouse (static queries), notebooks provide:

Full Code Control

Write reproducible PySpark, Spark SQL, or Scala transformations with complete version history.

Spark Power

Distribute processing across a Spark cluster. Handle datasets from MB to TB+ with parallel execution.

Delta Lake Native

Read/write ACID-compliant Delta tables. Merge, upsert, and optimize with ease.

Pipeline Integration

Orchestrate with Data Pipelines. Schedule, parameterize, and monitor at scale.

Transform Data Using Notebooks vs. Alternatives

| Tool | Best For | Skill Level | Scale | Cost |
|---|---|---|---|---|
| Dataflow Gen2 | Visual, low-code mapping & joins | Power Query knowledge | Small–Medium (GB) | Higher per GB processed |
| SQL Warehouse | Static SQL queries, aggregations | SQL expertise | Large (TB+) | Low (optimized) |
| Spark Notebooks | Complex logic, feature engineering, ML | Python/Scala + Spark | Medium–Very Large (TB+) | Low–Medium (optimizable) |

Quick Start: Transform Data Using Notebooks

Prerequisites

- Required Fabric Capacity: F2 or higher

- Required Workspace Role: Admin, Member, or Contributor

- Required Lakehouse: Fabric Lakehouse as destination

- Recommended: Git knowledge for version control

Step 1: Create & Attach Notebook

Open Workspace

Navigate to your Fabric workspace. Ensure you have Admin, Member, or Contributor permissions.

Create Notebook

Click + New Item → Notebook. Choose default language (PySpark recommended).

Attach Lakehouse

In ribbon: Add → Lakehouse. Select your destination (or create new).

Write First Cell

Read a Delta table and inspect schema.

Step 2: Read Delta Tables
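In a Fabric notebook, reading a table from the attached Lakehouse is typically `df = spark.read.table("sales_raw")` followed by `df.printSchema()` (the table name `sales_raw` is a hypothetical example). A Delta table declares one type per column, so the sketch below applies the same kind of inspection to raw records (for example, rows parsed from JSON) using only the Python standard library: for each column it reports the value types seen and whether nulls occur. A raw column that shows up with more than one type is an early sign of schema drift.

```python
from collections import defaultdict


def infer_schema(rows):
    """Return {column: (sorted type names, nullable)} for a list of dict rows.

    A plain-Python stand-in for df.printSchema(); for a Delta table Spark
    reads the declared schema from the transaction log instead.
    """
    types = defaultdict(set)
    nullable = defaultdict(bool)
    for row in rows:
        for col, value in row.items():
            if value is None:
                nullable[col] = True
            else:
                types[col].add(type(value).__name__)
    columns = sorted(set(types) | set(nullable))
    return {col: (sorted(types[col]), nullable[col]) for col in columns}


raw_rows = [
    {"order_id": 1, "amount": 19.99, "region": "NA"},
    {"order_id": 2, "amount": "25.00", "region": None},  # amount arrived as text
]
for col, (type_names, is_nullable) in infer_schema(raw_rows).items():
    print(f"{col}: {' | '.join(type_names)} nullable={is_nullable}")
```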

Step 3: Transform & Clean
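Cleaning usually combines a few column-level operations: trimming and normalizing text, casting types, dropping rows without a business key, and removing duplicate keys. In PySpark these map to functions such as `F.trim`, `F.upper`, `.cast(...)`, `.dropna(subset=[...])`, and `.dropDuplicates([...])`, with `F` being `pyspark.sql.functions`. The standard-library sketch below models those steps on a list of dict rows; the column names `order_id`, `amount`, and `region` are hypothetical.

```python
from decimal import ROUND_HALF_UP, Decimal, InvalidOperation


def clean_orders(rows):
    """Normalize text, cast amount to Decimal, drop rows without a key, dedupe by key.

    Models F.trim/F.upper, .cast("decimal(18,2)"), .dropna(subset=["order_id"])
    and .dropDuplicates(["order_id"]). This sketch keeps the first row seen per
    key; Spark's dropDuplicates makes no such ordering guarantee.
    """
    seen = set()
    cleaned = []
    for row in rows:
        order_id = row.get("order_id")
        if order_id is None or order_id in seen:
            continue  # missing business key, or a duplicate of a row already kept
        try:
            amount = Decimal(str(row.get("amount")).strip()).quantize(
                Decimal("0.01"), rounding=ROUND_HALF_UP
            )
        except InvalidOperation:
            amount = None  # unparseable text becomes null, like a non-ANSI Spark cast
        region = (row.get("region") or "").strip().upper() or None
        seen.add(order_id)
        cleaned.append({"order_id": order_id, "amount": amount, "region": region})
    return cleaned


raw = [
    {"order_id": 1, "amount": " 19.99 ", "region": " na "},
    {"order_id": 1, "amount": "19.99", "region": "NA"},  # duplicate key
    {"order_id": None, "amount": "5", "region": "EU"},    # no business key
    {"order_id": 2, "amount": "n/a", "region": ""},       # bad amount, empty region
]
print(clean_orders(raw))
```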

Step 4: Write Curated Delta Table
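Writing the curated result is typically `df.write.format("delta").mode("overwrite").partitionBy("order_date").saveAsTable("sales_curated")`, with hypothetical column and table names. The plain-Python sketch below models what `partitionBy` does to the physical layout: rows are grouped into Hive-style folders named `column=value`, and the partition value lives in the folder name rather than in the data files. The `Tables/sales_curated` path is an assumed example.

```python
from collections import defaultdict


def plan_partitioned_write(rows, partition_col, table_path="Tables/sales_curated"):
    """Group rows into Hive-style partition folders, as partitionBy(partition_col) does.

    The partition value moves into the folder name, so it is dropped from each
    row's payload. table_path is a hypothetical example location.
    """
    layout = defaultdict(list)
    for row in rows:
        value = row[partition_col]
        payload = {k: v for k, v in row.items() if k != partition_col}
        layout[f"{table_path}/{partition_col}={value}"].append(payload)
    return dict(layout)


rows = [
    {"order_id": 1, "order_date": "2025-01-01", "amount": 10},
    {"order_id": 2, "order_date": "2025-01-02", "amount": 20},
    {"order_id": 3, "order_date": "2025-01-01", "amount": 30},
]
for folder, payloads in plan_partitioned_write(rows, "order_date").items():
    print(folder, payloads)
```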

5 Production Patterns to Transform Data Using Notebooks

Battle-tested patterns for reliable, production-safe data transformations.

Pattern 1: Schema Enforcement & Data Quality Gates

Intent: Prevent silent failures caused by schema drift, using explicit type casting and capturing anomalous rows instead of loading them.
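A minimal sketch of a quality gate, assuming an explicit target schema expressed as column-to-cast-function mappings (the `SCHEMA` dict and its columns are hypothetical). Every row is cast explicitly; rows that fail go to a quarantine list with the reason, and the whole run fails if the reject rate exceeds a threshold. In PySpark the same idea is casting with `.cast()` and treating rows where the source value is non-null but the cast result is null as rejects; that holds when ANSI mode is off, while with ANSI mode on a bad cast raises an error instead.

```python
from datetime import date

# Hypothetical target schema: column name -> function that casts a raw value.
SCHEMA = {
    "order_id": int,
    "order_date": date.fromisoformat,
    "amount": float,
}


def enforce_schema(rows, schema=SCHEMA, max_reject_rate=0.05):
    """Cast every column explicitly and quarantine rows that fail.

    Columns not in the schema are dropped. Raises ValueError (the quality gate)
    when the share of rejected rows exceeds max_reject_rate, so a drifted
    source fails loudly instead of loading bad data silently.
    """
    good, quarantine = [], []
    for row in rows:
        missing = set(schema) - set(row)
        if missing:
            quarantine.append({"row": row, "reason": f"missing columns {sorted(missing)}"})
            continue
        try:
            good.append({col: cast(row[col]) for col, cast in schema.items()})
        except (TypeError, ValueError) as exc:
            quarantine.append({"row": row, "reason": str(exc)})
    if rows and len(quarantine) / len(rows) > max_reject_rate:
        raise ValueError(f"quality gate failed: {len(quarantine)}/{len(rows)} rows rejected")
    return good, quarantine


batch = [
    {"order_id": "1", "order_date": "2025-01-01", "amount": "9.5"},
    {"order_id": "2", "order_date": "2025-02-30", "amount": "1"},  # invalid date
]
good, rejected = enforce_schema(batch, max_reject_rate=0.5)
print(len(good), "loaded;", rejected[0]["reason"])
```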

Pattern 2: Idempotent Merges with Delta MERGE

Intent: Make notebooks safe to rerun without duplication. Delta MERGE on a business key updates rows that already exist instead of inserting them again, and each MERGE commits atomically.
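With the Delta Lake Python API the upsert is typically `DeltaTable.forName(spark, "sales_curated").alias("t").merge(updates.alias("s"), "t.order_id = s.order_id").whenMatchedUpdateAll().whenNotMatchedInsertAll().execute()`, where the table and key names are hypothetical. The standard-library sketch below models the same semantics so the idempotency property can be checked directly: applying a batch twice gives the same result as applying it once.

```python
def merge_upsert(target, source, key):
    """Model of MERGE ... WHEN MATCHED UPDATE * WHEN NOT MATCHED INSERT *.

    target and source are lists of dict rows with the same columns; returns a
    new list and leaves the inputs unchanged.
    """
    source_keys = [row[key] for row in source]
    if len(source_keys) != len(set(source_keys)):
        # Stricter than Delta, which fails only when several source rows
        # match the same target row; deduplicate the batch first either way.
        raise ValueError("source has duplicate keys; deduplicate before merging")
    merged = {row[key]: dict(row) for row in target}
    for row in source:
        merged[row[key]] = dict(row)  # matched: update all columns; not matched: insert
    return list(merged.values())


target = [{"order_id": 1, "amount": 10}, {"order_id": 2, "amount": 20}]
batch = [{"order_id": 2, "amount": 25}, {"order_id": 3, "amount": 30}]
once = merge_upsert(target, batch, "order_id")
twice = merge_upsert(once, batch, "order_id")
print(once == twice)  # rerunning the same batch changes nothing
```

Because the second call returns the same rows as the first, a pipeline can retry this step after a failure without creating duplicates.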

Pattern 3: Window Functions for Time-Series Features
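Window functions compute a value for each row from its neighbors within a group, without collapsing rows. In PySpark the two features below would be `F.lag("amount").over(w)` and `F.sum("amount").over(w.rowsBetween(-2, 0))` with `w = Window.partitionBy("customer_id").orderBy("order_date")` (hypothetical columns). The sketch models both in plain Python: the previous order amount and a rolling sum over the current and two previous orders.

```python
from collections import defaultdict


def add_window_features(rows, window_size=3):
    """Per customer, ordered by order_date: the previous amount (lag 1) and a
    rolling sum over the current row and the window_size - 1 rows before it."""
    by_customer = defaultdict(list)
    for row in rows:
        by_customer[row["customer_id"]].append(row)
    out = []
    for orders in by_customer.values():
        orders.sort(key=lambda r: r["order_date"])
        for i, row in enumerate(orders):
            window = orders[max(0, i - window_size + 1): i + 1]
            out.append({
                **row,
                "prev_amount": orders[i - 1]["amount"] if i > 0 else None,
                "rolling_sum": sum(r["amount"] for r in window),
            })
    return out


orders = [
    {"customer_id": "A", "order_date": "2025-01-03", "amount": 30},
    {"customer_id": "A", "order_date": "2025-01-01", "amount": 10},
    {"customer_id": "A", "order_date": "2025-01-02", "amount": 20},
    {"customer_id": "A", "order_date": "2025-01-04", "amount": 40},
    {"customer_id": "B", "order_date": "2025-01-01", "amount": 5},
]
for r in add_window_features(orders):
    print(r["customer_id"], r["order_date"], r["prev_amount"], r["rolling_sum"])
```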

Pattern 4: Feature Engineering & Versioning
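One way to version features, sketched below under assumed column names: compute each customer's features as of a cutoff date, ignore any transaction after that date, and stamp every row with the cutoff and a `FEATURE_VERSION` constant (a hypothetical convention) that you bump whenever the feature logic changes. Appending these rows instead of overwriting them keeps every historical snapshot available. In PySpark this is a `groupBy("customer_id").agg(...)` plus `F.lit(...)` columns, written with `mode("append")`.

```python
from collections import defaultdict
from datetime import date

FEATURE_VERSION = "v2"  # hypothetical: bump whenever feature logic changes


def build_customer_features(transactions, as_of):
    """Aggregate transactions up to as_of into one feature row per customer.

    Each row carries as_of and FEATURE_VERSION, so snapshots can be appended
    rather than overwritten and a model can be traced to the exact features.
    """
    agg = defaultdict(lambda: {"order_count": 0, "total_spend": 0.0, "last_order": None})
    for t in transactions:
        if t["order_date"] > as_of:
            continue  # ignore future data to avoid leakage
        a = agg[t["customer_id"]]
        a["order_count"] += 1
        a["total_spend"] += t["amount"]
        if a["last_order"] is None or t["order_date"] > a["last_order"]:
            a["last_order"] = t["order_date"]
    return [
        {
            "customer_id": cid,
            "order_count": a["order_count"],
            "total_spend": round(a["total_spend"], 2),
            "days_since_last_order": (as_of - a["last_order"]).days,
            "as_of": as_of,
            "feature_version": FEATURE_VERSION,
        }
        for cid, a in sorted(agg.items())
    ]


tx = [
    {"customer_id": "A", "order_date": date(2025, 1, 1), "amount": 10.0},
    {"customer_id": "A", "order_date": date(2025, 1, 10), "amount": 15.5},
    {"customer_id": "B", "order_date": date(2025, 1, 5), "amount": 7.0},
    {"customer_id": "A", "order_date": date(2025, 2, 1), "amount": 100.0},  # after cutoff
]
for row in build_customer_features(tx, as_of=date(2025, 1, 31)):
    print(row)
```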

Pattern 5: Incremental Ingestion with Partition Pruning
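Incremental runs typically keep a watermark, the last partition value processed, and read only newer partitions. When the table is partitioned by that column, a filter such as `F.col("order_date") > last_watermark` lets Spark skip the older partitions entirely (partition pruning). The sketch below models that logic in plain Python with ISO date strings, which sort correctly as text; the `state` dict stands in for wherever you persist the watermark, for example a small control table (an assumption, not a Fabric feature).

```python
def select_partitions(partition_values, last_watermark):
    """Partitions an incremental run must read: only those newer than the watermark.

    Models partition pruning on a table partitioned by an ISO date string; every
    older partition is skipped without being opened.
    """
    return sorted(p for p in partition_values if p > last_watermark)


def run_incremental(partition_values, state):
    """Process only new partitions, then advance the stored watermark."""
    to_read = select_partitions(partition_values, state["watermark"])
    # ... read, transform, and write just these partitions here ...
    if to_read:
        state["watermark"] = to_read[-1]  # advance only after the write succeeds
    return to_read


state = {"watermark": "2025-01-01"}  # hypothetical persisted state
partitions = ["2025-01-01", "2025-01-02", "2025-01-03"]
print(run_incremental(partitions, state), state["watermark"])
print(run_incremental(partitions, state))  # second run: nothing new to read
```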

Transform Data Using Notebooks: Performance Optimization

When you transform data using notebooks at scale, optimize for speed and cost. Focus on reducing data movement (shuffle).

Top 5 Performance Levers

1. Partition Strategically

Split tables into 0.5–2 GB partitions by date or business keys. Queries that filter on the partition column read only the matching partitions (partition pruning), which greatly speeds up range filters.

2. Filter Early

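Apply filters before joins and aggregations. Predicate pushdown and file skipping let Spark avoid reading data the filter excludes; the savings depend on how selective the filter is.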

3. Broadcast Dimensions

Broadcast small dimension tables (as a rule of thumb, under about 100 MB) so the large table is joined without a shuffle. This often speeds up star-schema joins dramatically.

4. Z-Order / Liquid Cluster

Multi-dimensional clustering co-locates related values, so queries that filter on the clustered columns skip more files and can read substantially less data.

5. Monitor Spark UI

Profile shuffle, task count, and duration. Identify bottlenecks and tune accordingly.

Detailed: Partitioning When Transforming Data Using Notebooks
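A quick way to check partition sizing against the 0.5–2 GB guideline above is to sum file sizes per partition and flag outliers. The sketch assumes you already have those per-partition byte counts (for example, aggregated from a file listing of the table folder); the thresholds are parameters, so adjust them if your workload calls for a different target.

```python
GB = 1024 ** 3


def review_partition_sizes(sizes_bytes, low=0.5 * GB, high=2 * GB):
    """Bucket partitions by size against a target band (0.5 to 2 GB by default).

    sizes_bytes maps partition value -> total bytes on disk. Many undersized
    partitions suggest a coarser key (for example month instead of day);
    oversized ones may call for a finer key.
    """
    report = {"too_small": [], "ok": [], "too_large": []}
    for value, size in sorted(sizes_bytes.items()):
        if size < low:
            report["too_small"].append(value)
        elif size > high:
            report["too_large"].append(value)
        else:
            report["ok"].append(value)
    return report


sizes = {"2025-01": 1.2 * GB, "2025-02": 0.1 * GB, "2025-03": 3 * GB}  # hypothetical
print(review_partition_sizes(sizes))
```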

Broadcast Joins When Transforming Data Using Notebooks
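A broadcast join copies the small table to every executor as an in-memory hash map and streams the large table through that map, so the large table never has to be repartitioned (shuffled) by the join key. In PySpark you can request this with `facts.join(F.broadcast(dims), "product_id")`; Spark also broadcasts automatically when a table's estimated size is below `spark.sql.autoBroadcastJoinThreshold` (10 MB by default in Apache Spark; the Fabric runtime default may differ). The plain-Python sketch below models the hash-join part with hypothetical columns.

```python
from collections import defaultdict


def broadcast_hash_join(facts, dims, key):
    """Inner join modeled on a broadcast hash join.

    The small `dims` table is built into a hash map once (Spark ships this map
    to every executor); `facts` is streamed row by row and never regrouped by
    `key`, which is why no shuffle is needed.
    """
    lookup = defaultdict(list)
    for d in dims:
        lookup[d[key]].append(d)
    joined = []
    for f in facts:
        # Inner join: facts with no matching dimension row are dropped.
        for d in lookup.get(f[key], []):
            joined.append({**d, **f})  # fact columns win on name clashes
    return joined


facts = [{"product_id": 1, "qty": 2}, {"product_id": 2, "qty": 1}, {"product_id": 9, "qty": 5}]
dims = [{"product_id": 1, "name": "pen"}, {"product_id": 2, "name": "ink"}]  # hypothetical
print(broadcast_hash_join(facts, dims, "product_id"))
```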

Monitor with Spark UI

After running a cell, click Spark application in ribbon → open Spark UI:

- Stages tab: Which stage took longest? Look for shuffle indicators.

- Shuffle read/write: If shuffle > 10× input data size, investigate join/agg.

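- Tasks: How many ran in parallel? More concurrent tasks usually means better parallelism, up to the number of available cores; thousands of tiny tasks add scheduling overhead.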
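To apply the 10× rule of thumb consistently, you can record a few numbers per stage from the Stages tab and check them in code. The sketch below assumes hand-copied values under hypothetical field names; it is a triage helper, not a Spark API.

```python
def flag_shuffle_heavy_stages(stages, ratio=10.0):
    """Return ids of stages whose shuffle write exceeds `ratio` times their input.

    `stages` holds numbers copied from the Spark UI Stages tab. Stages with no
    direct input (they read shuffle data from an earlier stage) are skipped.
    """
    flagged = []
    for stage in stages:
        input_bytes = stage["input_bytes"]
        if input_bytes and stage["shuffle_write_bytes"] > ratio * input_bytes:
            flagged.append(stage["stage_id"])
    return flagged


stages = [  # hypothetical figures, in bytes
    {"stage_id": 0, "input_bytes": 2_000_000, "shuffle_write_bytes": 1_500_000},
    {"stage_id": 1, "input_bytes": 1_000_000, "shuffle_write_bytes": 40_000_000},
    {"stage_id": 2, "input_bytes": 0, "shuffle_write_bytes": 500_000},
]
print(flag_shuffle_heavy_stages(stages))
```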

Notebook UI/UX Best Practices When Transforming Data Using Notebooks

Production-grade notebooks are readable, maintainable, and collaborative. Apply these patterns:

1. Header Block with Full Documentation
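A header cell, sketched below with hypothetical names, tells a reader what the notebook does, what it reads and writes, and whether it is safe to rerun. Keeping the same facts in a small dict also lets the notebook print them at the start of every run, so they appear in the run output.

```python
# ==========================================================================
# Notebook : nb_sales_curated   (hypothetical name)
# Purpose  : Clean raw sales and upsert them into the curated sales table
# Inputs   : sales_raw (Delta table)
# Outputs  : sales_curated (Delta table, partitioned by order_date)
# Schedule : Daily, via a Data Pipeline Notebook activity
# Rerun    : Safe; writes use MERGE on order_id
# Owner    : Data engineering team
# ==========================================================================
NOTEBOOK_INFO = {
    "name": "nb_sales_curated",
    "inputs": ["sales_raw"],
    "outputs": ["sales_curated"],
    "safe_to_rerun": True,
}
print(f"Starting {NOTEBOOK_INFO['name']}: {NOTEBOOK_INFO['inputs']} -> {NOTEBOOK_INFO['outputs']}")
```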

2. Centralized Configuration Cell
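A minimal sketch of a configuration cell, assuming a frozen dataclass holds every tunable value (table names, merge key, thresholds) so later cells never hard-code them. The field names, defaults, and the environment-prefix naming convention are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobConfig:
    """Every tunable setting in one place; all values here are hypothetical defaults."""

    source_table: str = "sales_raw"
    target_table: str = "sales_curated"
    merge_key: str = "order_id"
    partition_col: str = "order_date"
    max_reject_rate: float = 0.05
    env: str = "dev"

    def qualified(self, table: str) -> str:
        """Prefix a table name with the environment, e.g. dev_sales_curated."""
        return f"{self.env}_{table}"


CONFIG = JobConfig()  # frozen: later cells cannot change settings by accident
print(CONFIG.qualified(CONFIG.target_table))
```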

3. Rich Visualizations & Interactivity

4. Error Handling & Logging
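A minimal sketch of step-level error handling with Python's standard `logging` module: each step logs when it starts and finishes, and a failure is logged with its traceback and then re-raised, so the notebook run, and a pipeline activity running it, is marked failed rather than continuing with partial data. The logger name is hypothetical.

```python
import logging

# basicConfig may be a no-op if the environment has already configured logging.
logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(name)s %(message)s")
log = logging.getLogger("nb_sales_curated")  # hypothetical logger name


def run_step(name, func, *args, **kwargs):
    """Run one notebook step with start/finish logging.

    Failures are logged with their traceback and re-raised, so the run is
    marked failed instead of continuing with partial results.
    """
    log.info("step %s started", name)
    try:
        result = func(*args, **kwargs)
    except Exception:
        log.exception("step %s failed", name)
        raise
    log.info("step %s finished", name)
    return result


total = run_step("add_amounts", sum, [10, 20, 30])
```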

Orchestration: Transform Data Using Notebooks at Scale

Interactive development is great; production reliability requires Data Pipelines and CI/CD.

Step 1: Parameterize Your Notebook
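In Fabric, you parameterize a notebook by marking one cell as the parameter cell (cell toolbar, More commands, Toggle parameter cell); its values are defaults for interactive runs, and a pipeline's Notebook activity can override them at run time. The sketch below shows such a cell followed by a validation cell; the parameter names, the "yesterday" default, and the bool-or-text handling of `full_refresh` are assumptions for illustration.

```python
from datetime import date, timedelta

# --- Parameter cell (marked with "Toggle parameter cell" in Fabric) ---
# Defaults for interactive runs; a pipeline Notebook activity can override them.
run_date = ""            # ISO date string; empty means "yesterday"
source_table = "sales_raw"
full_refresh = False     # accepted as a bool or as the text "true"/"false"


# --- Next cell: validate and normalize the parameters ---
def resolve_params(run_date, full_refresh, today=None):
    """Return (run date, full-refresh flag), failing fast on bad input."""
    today = today or date.today()
    resolved = date.fromisoformat(run_date) if run_date else today - timedelta(days=1)
    flag = str(full_refresh).strip().lower()
    if flag not in {"true", "false"}:
        raise ValueError(f"full_refresh must be true or false, got {full_refresh!r}")
    return resolved, flag == "true"


RUN_DATE, FULL_REFRESH = resolve_params(run_date, full_refresh)
print(f"Processing {source_table} for {RUN_DATE} (full refresh: {FULL_REFRESH})")
```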

Step 2: Add to Data Pipeline

- Create new Data Pipeline in workspace.

- Drag Notebook activity onto canvas.

- In Settings, select your notebook and pass parameters via pipeline variables.

- Add downstream steps (copy, other notebooks) to build workflow.

Step 3: Schedule & Monitor

- Click Schedule in pipeline ribbon.

- Set recurrence (daily, hourly, weekly).

- Configure retries and a timeout on the Notebook activity, and add a failure alert (for example, an email notification on the activity's failure path).

- Monitor runs from the Monitor hub, and track capacity consumption in the Microsoft Fabric Capacity Metrics app.

Step 4: CI/CD & Version Control

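Store notebooks in Git (Azure Repos, GitHub) through Fabric Git integration, and follow Fabric CI/CD best practices: validate changes in pull requests, then promote them with deployment pipelines.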
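One practice that makes pull-request validation meaningful, sketched below under assumed names: keep row-level transformation logic in plain functions that need no Spark session, and unit-test them in the CI pipeline (for example with pytest, which collects functions named `test_*`) before changes reach the workspace. `normalize_region` is a hypothetical example.

```python
def normalize_region(value):
    """Hypothetical transform kept free of Spark so CI can test it directly."""
    if value is None:
        return None
    cleaned = value.strip().upper()
    return cleaned or None


# In a repository this would live in a test_*.py file that pytest collects.
def test_normalize_region():
    assert normalize_region(" na ") == "NA"
    assert normalize_region("   ") is None
    assert normalize_region(None) is None


if __name__ == "__main__":
    test_normalize_region()
    print("all tests passed")
```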

Security & Governance When Transforming Data Using Notebooks

Three-Layer RBAC in Fabric

| Layer | Control | Example |
|---|---|---|
| Workspace | Admin/Member/Contributor/Viewer roles | Only engineers are Members. Analysts are Viewers. |
| Lakehouse | Row-level security (RLS) + column masking | NA managers see only NA data. SSNs are masked. |
| Execution | Service principal / user identity | Notebook runs under your Entra ID; inherits Lakehouse permissions. |

Data Masking for PII
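A sketch of two common masking approaches in plain Python: partial masking for display (keep the last four digits) and keyed hashing (HMAC-SHA256) for pseudonymization, which stays stable for joins but cannot be recomputed without the key. A plain unsalted hash of an SSN is weak because the set of possible inputs is small enough to enumerate. PySpark offers `F.sha2(col, 256)` for SHA-256; the HMAC variant here is a swapped-in technique, and the key should come from a secret store such as Azure Key Vault rather than being hard-coded.

```python
import hashlib
import hmac


def mask_ssn(ssn):
    """Partial masking for display: keep only the last four digits."""
    digits = "".join(ch for ch in ssn if ch.isdigit())
    return "***-**-" + digits[-4:]


def pseudonymize(value, secret_key):
    """Keyed hash (HMAC-SHA256): the same input and key always give the same
    token, so joins still work, but tokens cannot be recomputed without the key."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


key = b"example-key-load-from-key-vault"  # hypothetical; never hard-code real keys
print(mask_ssn("123-45-6789"), pseudonymize("123-45-6789", key)[:16])
```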

Audit Logging & Lineage

Microsoft Purview integrates with Fabric to track:

- Who accessed what data and when

- What transformations ran

- Data lineage (notebook → Delta table)

Enable Purview integration and automatically capture lineage for compliance audits.

Real-World Recipes: Transform Data Using Notebooks

Recipe 1: Daily Incremental Sales Load + Quality Checks

Scenario: CSV files arrive daily. Load, dedupe, validate, and upsert to curated Delta table.
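The recipe's four steps, sketched in plain Python under stated assumptions: the CSV has `order_id`, `amount`, and `updated_at` columns (hypothetical), `updated_at` is an ISO timestamp so text comparison orders it correctly, and a dict keyed by `order_id` stands in for the curated Delta table. In a notebook the same steps would be `spark.read.csv`, a latest-row-per-key dedupe, a validation filter, and a Delta MERGE.

```python
import csv
import io


def load_daily_sales(csv_text, target):
    """Parse one day's CSV, dedupe, validate, and upsert into `target`.

    target maps order_id -> row and stands in for the curated Delta table.
    Returns (number of rows upserted, list of rejected rows).
    """
    latest, rejected = {}, []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Validate: amount must parse as a number and be non-negative; key required.
        try:
            row["amount"] = float(row["amount"])
        except (TypeError, ValueError):
            rejected.append(row)
            continue
        if not row["order_id"] or row["amount"] < 0:
            rejected.append(row)
            continue
        # Dedupe: keep the newest version of each order within the file.
        current = latest.get(row["order_id"])
        if current is None or row["updated_at"] > current["updated_at"]:
            latest[row["order_id"]] = row
    # Upsert: overwrite existing keys and add new ones, like a Delta MERGE.
    target.update(latest)
    return len(latest), rejected


daily_csv = (
    "order_id,amount,updated_at\n"
    "1,10.0,2025-01-01T08:00\n"
    "1,12.5,2025-01-01T09:00\n"
    "2,abc,2025-01-01T08:00\n"
    "3,-4,2025-01-01T08:00\n"
    "4,7,2025-01-01T08:00\n"
)
curated = {"4": {"order_id": "4", "amount": 5.0, "updated_at": "2024-12-31T08:00"}}
print(load_daily_sales(daily_csv, curated))
```

Running the same file twice leaves the target unchanged, so a failed run can simply be retried.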

Recipe 2: Customer Feature Engineering for ML

Scenario: Build versioned feature table from transaction history for ML training.
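Training sets built from a versioned feature table need a point-in-time lookup: for each customer, the newest snapshot dated on or before the training cutoff, restricted to one feature version. The sketch below models that selection with hypothetical column names; in PySpark it is usually a filter plus `F.row_number().over(Window.partitionBy("customer_id").orderBy(F.col("as_of").desc()))`, keeping row 1.

```python
def features_as_of(snapshots, cutoff, feature_version):
    """Per customer, the newest snapshot dated on or before `cutoff`.

    Snapshots after the cutoff are ignored so training rows never see future
    information, and only one feature_version is used so definitions match.
    Dates are ISO strings, which compare correctly as text.
    """
    best = {}
    for snap in snapshots:
        if snap["feature_version"] != feature_version or snap["as_of"] > cutoff:
            continue
        current = best.get(snap["customer_id"])
        if current is None or snap["as_of"] > current["as_of"]:
            best[snap["customer_id"]] = snap
    return [best[cid] for cid in sorted(best)]


snapshots = [  # hypothetical appended feature snapshots
    {"customer_id": "A", "as_of": "2025-01-31", "feature_version": "v2", "order_count": 2},
    {"customer_id": "A", "as_of": "2025-02-28", "feature_version": "v2", "order_count": 3},
    {"customer_id": "A", "as_of": "2025-03-31", "feature_version": "v2", "order_count": 5},
    {"customer_id": "B", "as_of": "2025-02-28", "feature_version": "v1", "order_count": 1},
]
print(features_as_of(snapshots, cutoff="2025-03-15", feature_version="v2"))
```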

Recipe 3: Hourly KPI Aggregation for BI

Scenario: Refresh KPI summary hourly for Power BI dashboards.
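A sketch of the hourly aggregation in plain Python, with hypothetical `order_ts` and `amount` columns: truncate each timestamp to the hour, then compute order count, revenue, and average order value per hour. In PySpark the grouping key would be `F.date_trunc("hour", "order_ts")`. For an hourly refresh, recomputing the last few hours and merging them into the summary table also picks up late-arriving orders.

```python
from collections import defaultdict
from datetime import datetime


def hourly_kpis(orders):
    """Aggregate orders into hourly KPI rows: order count, revenue, average order value."""
    buckets = defaultdict(lambda: {"orders": 0, "revenue": 0.0})
    for o in orders:
        hour = o["order_ts"].replace(minute=0, second=0, microsecond=0)  # truncate to hour
        b = buckets[hour]
        b["orders"] += 1
        b["revenue"] += o["amount"]
    return [
        {
            "hour": hour,
            "orders": b["orders"],
            "revenue": round(b["revenue"], 2),
            "avg_order_value": round(b["revenue"] / b["orders"], 2),
        }
        for hour, b in sorted(buckets.items())
    ]


orders = [
    {"order_ts": datetime(2025, 1, 1, 9, 15), "amount": 10.0},
    {"order_ts": datetime(2025, 1, 1, 9, 45), "amount": 30.0},
    {"order_ts": datetime(2025, 1, 1, 10, 5), "amount": 5.5},
]
for row in hourly_kpis(orders):
    print(row)
```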

Comparison: When to Use Notebooks vs. Alternatives

When should you transform data using notebooks vs. Dataflow, Warehouse, or Pipelines?

🔷 Notebooks (This Guide)

- Iterative, complex transformations

- Feature engineering & ML preprocessing

- Multi-step CDC pipelines

- Custom business logic

- Often substantially cheaper than Dataflow Gen2 at large scale

- Production-grade reliability

📊 Dataflow Gen2

- Visual, drag-and-drop interface

- Power Query familiar to BI users

- Good for simple mapping/joins

- Limited complex logic

- Higher cost per GB processed

- Best for exploratory projects

🏢 SQL Warehouse

- Static, declarative SQL queries

- Large-scale aggregations

- Best for structured business data

- T-SQL / stored procs

- Optimized for BI queries

- Often more cost-efficient than notebooks for SQL-only workloads

⚙️ Data Pipelines

- Orchestration & scheduling

- Copy activity for data movement

- Chains notebooks & other activities

- Not suitable for standalone transformation

- Essential for production reliability

- Works alongside notebooks

FAQ: Transform Data Using Notebooks

Q1: How do I prevent duplicate data when I transform data using notebooks?

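Answer: Use Delta MERGE with a deterministic business key, and deduplicate the source batch first (for example, keep the latest row per key). MERGE does not deduplicate the source: if several source rows match the same target row, the MERGE fails. With a deduplicated batch, rerunning the same MERGE updates existing rows in place instead of inserting duplicates.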

Q2: My notebook is slow. How do I debug?

Answer: Click Spark application → Spark UI → Stages tab. Check Shuffle size. If shuffle > 10× input, you have a join/agg bottleneck. Fix by: (1) filtering early, (2) broadcasting small tables, (3) repartitioning.

Q3: Can I transform data using notebooks across multiple Lakehouses at once?

Answer: Yes. Attach a default Lakehouse, and read from any other Lakehouse with `spark.read.format("delta").load("abfss://…")`. Use OneLake shortcuts for seamless cross-workspace access.

Q4: Is it safe to run OPTIMIZE and VACUUM in production?

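Answer: OPTIMIZE: Generally safe while readers query the table; it compacts small files into a new table version, and readers keep using the snapshot they started with (it can still conflict with concurrent updates or deletes). VACUUM: Permanently deletes data files that are no longer referenced and are older than the retention period (default 7 days). Keep the default or longer, because shorter retention breaks time travel to older versions and can remove files that long-running queries still read; schedule it during maintenance windows.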
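A simplified model of which files VACUUM deletes, assuming you know when each file was removed from the table by an earlier operation (a tombstone). Files removed more recently than the retention window are kept, which is what keeps time travel and in-flight readers working; shortening `retain_hours` below the 168-hour (7-day) default widens what gets deleted. In Spark SQL the command is `VACUUM table_name RETAIN 168 HOURS`.

```python
from datetime import datetime, timedelta


def vacuum_candidates(tombstones, now, retain_hours=168):
    """Files a VACUUM would delete: removed from the table longer ago than the
    retention window. tombstones maps file path -> time it was removed."""
    cutoff = now - timedelta(hours=retain_hours)
    return sorted(path for path, removed_at in tombstones.items() if removed_at < cutoff)


now = datetime(2025, 1, 10)
tombstones = {  # hypothetical files
    "part-a.parquet": datetime(2025, 1, 1),
    "part-b.parquet": datetime(2025, 1, 8),
}
print(vacuum_candidates(tombstones, now))                  # default 7-day retention
print(vacuum_candidates(tombstones, now, retain_hours=24))  # aggressive retention
```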

Q5: Should I use Python or Spark SQL in notebooks?

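Answer: PySpark (Python): Better for complex logic, ML, and pandas integration. Spark SQL: Concise for set-based, SQL-style transformations. Both run on the same Spark engine and optimizer, so performance is usually equivalent; choose by readability. Mix both in one notebook using the `%%sql` magic command.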

Q6: How do I version-control notebooks?

Answer: Store in Git (Azure Repos, GitHub). Use Fabric CI/CD for PR validation. Deploy via deployment pipelines or Terraform.

Q7: Can I use R or Scala when I transform data using notebooks?

Answer: R: Supported through SparkR and sparklyr, but most Fabric examples and tooling target Python. Scala: Supported, but Python is the most widely used choice. Stick with Python unless you need Scala for low-level Spark optimization or your team already works in R.

Q8: How do I cost-optimize notebooks?

Answer: (1) Partition tables & filter early, (2) Broadcast small tables, (3) Use Liquid Clustering, (4) Schedule during off-peak, (5) Monitor Capacity Metrics for spikes.

Ready to Transform Data Using Notebooks?

Start with a single daily ETL notebook. Run via pipeline. Monitor. Iterate. Once confident, scale to multi-source transformations, feature engineering, and ML pipelines.

Creating and editing notebooks adds no cost; running cells, whether interactively or on a schedule, consumes Spark compute from your Fabric capacity. Iterate rapidly. Build with confidence.