Data Mirroring in Fabric – Complete 2026 Guide

Complete 2026 guide to real-time data synchronization, CDC implementation, and production-grade mirroring patterns for Microsoft Fabric.

Updated January 2026

What is Data Mirroring in Fabric?

Data Mirroring in Fabric is a zero-ETL, near-real-time replication capability that continuously synchronizes transactional data from sources such as Azure SQL, Snowflake, and PostgreSQL into Microsoft Fabric's OneLake. It automates Change Data Capture (CDC), so analytics teams get fresh operational data without building complex ETL pipelines.

Fundamentally, mirroring operates in two phases:

- Initial Snapshot: Captures a consistent baseline from the source database

- Continuous CDC (Change Data Capture): Streams inserts, updates, and deletes in near real-time

Why Mirroring Matters

Mirroring removes much of the ETL development effort that a hand-built CDC pipeline requires, keeps analytics data fresh, and preserves the order of source transactions. The result is near-real-time analytics with little operational overhead, which matters for financial reporting, inventory tracking, and anomaly detection.

Key Capabilities (January 2026)

Sub-Minute Latency

Changes from source typically appear in Fabric within 30–60 seconds, enabling near-real-time dashboards and alerting.

Managed Infrastructure

Fabric handles snapshots, CDC configuration, and data delivery. No custom CDC code required.

Multi-Source Support

Azure SQL, PostgreSQL, MySQL, Kafka, on-premises databases, and custom sources via Open Mirroring.

Delta Lake Integration

Data lands in Delta Lake automatically, enabling ACID guarantees and seamless integration with notebooks and SQL Warehouse.

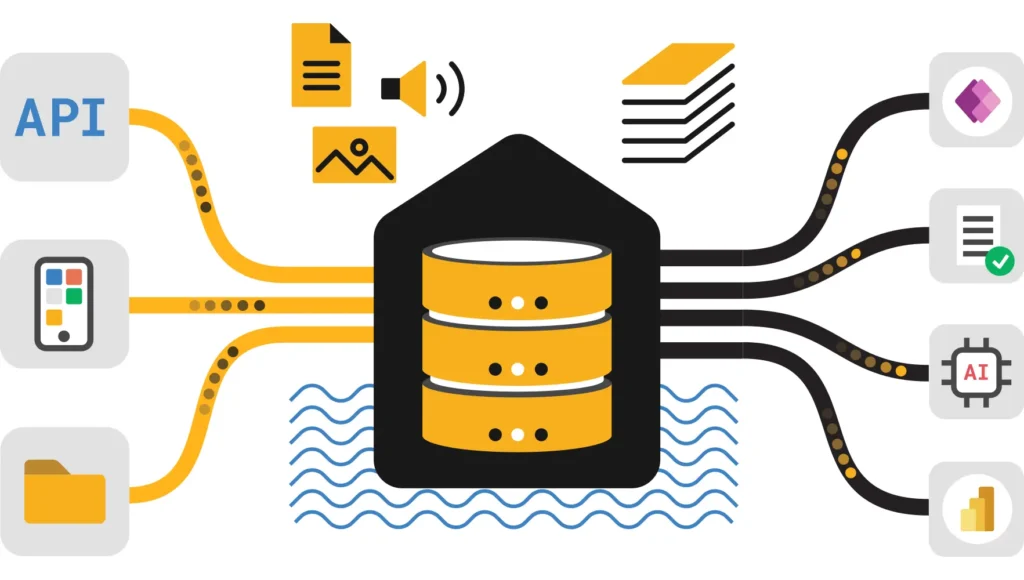

Supported Sources & Architecture

Data Mirroring supports 15+ enterprise sources and custom systems. Choose the approach that matches your infrastructure:

Native Connectors (Managed by Microsoft)

- Azure SQL Database & Managed Instance: Transaction-log-based change capture that Fabric configures on the source when mirroring is enabled

- Azure Database for PostgreSQL — Logical replication via WAL (Write-Ahead Log)

- Azure Database for MySQL — Binlog-based CDC with continuous streaming

- SQL Server (on-premises) — Via data gateway with SQL Server Agent CDC jobs

- Azure Synapse Dedicated SQL Pools — Dedicated connector for warehouse syncing

Open Mirroring (Custom & Multi-Cloud)

For systems not covered by native connectors, Open Mirroring provides a flexible API and SDK for building custom adapters:

- Amazon RDS (PostgreSQL, MySQL) — Via open mirroring with Fabric-provided SDK

- Google Cloud SQL — Cross-cloud replication using open mirroring adapters

- Apache Kafka & Event Streams — Ingest event logs and transform into mirrored tables

- Custom & Legacy Systems — JDBC drivers, ETL tools, and API-based adapters

Architecture Overview

Types of Mirroring & Use Cases

Choose the mirroring mode that aligns with your latency, control, and complexity requirements:

1. Source-Native Mirroring (Recommended for Cloud Databases)

Best for: Azure-hosted databases with native CDC support (Azure SQL, PostgreSQL, MySQL).

- Managed end-to-end by Fabric

- Automatic snapshot + CDC scheduling

- Minimal configuration overhead

- Sub-minute latency (typically 30–60 seconds)

2. Open Mirroring (Flexible & Extensible)

Best for: Custom sources, legacy systems, multi-cloud, or Kafka streams.

- Custom adapter development using Fabric SDK

- Full control over transformation logic

- Support for any CDC mechanism (logs, APIs, webhooks)

- Suitable for complex business logic

3. Metadata Mirroring (Schema & Governance)

Best for: Synchronizing schema changes and metadata across a Fabric workspace.

- Schema auto-discovery and registration

- Data lineage and impact analysis

- Governance catalog population

Setup & Prerequisites

Prerequisites Checklist

- Fabric Capacity (required): F64 or higher (P1+ equivalent) with available compute.

- Source Connectivity (required): Network access from Fabric to source (public IP or VNet peering).

- CDC Enabled on Source (required): Enable transaction log / WAL / binlog CDC on your database.

- Credentials & Permissions (required): Service account with read + CDC permissions on source.

- Data Gateway (recommended): For on-premises sources, install and configure the Fabric data gateway.

- Schema Validation (recommended): Document source schema and expected column types.

Step-by-Step Setup

Create Lakehouse & Destination Database

In Fabric, create a new Lakehouse. This will host your mirrored tables. Plan your schema upfront: one table per source entity.

Enable CDC on Source

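Azure SQL: Fabric mirroring configures change capture on the database when the mirror is created, so a manual statement is usually not needed. Note that ALTER DATABASE [YourDB] SET CHANGE_TRACKING = ON enables Change Tracking, which is a different feature from CDC; CDC itself is enabled per database with EXEC sys.sp_cdc_enable_db.

PostgreSQL: Enable logical replication by setting wal_level = logical in postgresql.conf (or the equivalent server parameter on a managed service), then restart the server.

MySQL: Set binlog_format = ROW in my.cnf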
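Before configuring the connection, it can help to confirm the PostgreSQL and MySQL settings programmatically. The sketch below is a hypothetical preflight helper: `check_cdc_settings` and the `settings` dictionary are illustrative names, not a Fabric API. It assumes you have already read the values from the server, for example with `SHOW wal_level` on PostgreSQL or `SHOW VARIABLES LIKE 'binlog_format'` on MySQL.

```python
# Hypothetical preflight check; the names below are illustrative, not part of Fabric.
REQUIRED_SETTINGS = {
    "postgresql": {"wal_level": "logical"},   # needed for logical replication
    "mysql": {"binlog_format": "ROW"},        # needed for row-level binlog events
}


def check_cdc_settings(source_type: str, settings: dict) -> list:
    """Return a list of human-readable problems; an empty list means the settings look fine."""
    required = REQUIRED_SETTINGS.get(source_type.lower())
    if required is None:
        return [f"no checks defined for source type '{source_type}'"]
    problems = []
    for name, expected in required.items():
        actual = settings.get(name)
        # Compare case-insensitively because servers may report values in either case.
        if actual is None or str(actual).lower() != expected.lower():
            problems.append(f"{name} is {actual!r}, expected {expected!r}")
    return problems
```

An empty result means the source is ready for the connection step; any other result lists what to change first.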

Configure Mirroring Connection

In Fabric, open the Lakehouse and select “New Mirrored Table”. Choose your source connector (native or open mirroring). Enter credentials and connection string. Test connectivity.

Select Tables & Start Snapshot

Choose which tables to mirror. Start with small tables (< 100 GB) to validate. Monitor snapshot progress in the Mirroring Hub.

Validate & Enable CDC Streaming

Once snapshot completes, enable CDC streaming. Observe row counts and latency. Create alerts for replication lag.

How Mirroring Works Under the Hood

Phase 1: Initial Snapshot

The snapshot phase establishes a consistent baseline by reading source tables at a specific point in time. Importantly, this captures the schema and all current rows. Fabric then writes them to Delta tables in OneLake with a _timestamp column marking the snapshot time.

Phase 2: Continuous CDC

After snapshot completion, Fabric continuously reads the source’s change log (transaction log, WAL, binlog) and applies changes in strict order. Each change is idempotent: inserts become UPSERT operations, deletes are marked or removed, and updates merge with existing rows.
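The ordering and idempotency described above can be illustrated with a small in-memory model. This is a sketch of the general technique, not Fabric's internal implementation: `ChangeEvent`, `apply_changes`, and the `seq` field (standing in for a log position such as an LSN) are assumed names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    seq: int                     # position in the source change log (assumed monotonic)
    op: str                      # "insert", "update", or "delete"
    key: int                     # primary key of the affected row
    row: Optional[dict] = None   # full row image for insert/update events


def apply_changes(table: dict, events: list, last_applied: int = 0) -> int:
    """Apply events to `table` (key -> row) in log order and return the new high-water mark."""
    for ev in sorted(events, key=lambda e: e.seq):
        if ev.seq <= last_applied:
            continue                       # already applied, so a replay is harmless
        if ev.op in ("insert", "update"):
            table[ev.key] = dict(ev.row)   # upsert: insert and update behave the same
        elif ev.op == "delete":
            table.pop(ev.key, None)        # deleting a missing row is a no-op
        else:
            raise ValueError(f"unknown operation: {ev.op}")
        last_applied = ev.seq
    return last_applied


table = {1: {"id": 1, "status": "new"}}    # state after the initial snapshot
events = [
    ChangeEvent(2, "update", 1, {"id": 1, "status": "paid"}),
    ChangeEvent(1, "insert", 2, {"id": 2, "status": "new"}),
    ChangeEvent(3, "delete", 2),
]
mark = apply_changes(table, events)
mark = apply_changes(table, events, last_applied=mark)  # replaying the batch changes nothing
```

Treating inserts and updates as the same upsert, and tracking a high-water mark, is what makes a retried batch safe.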

Type Mapping & Schema Handling

Fabric automatically maps source column types to Delta Lake equivalents. However, some mappings are ambiguous (e.g., SQL Server NVARCHAR(MAX) → Spark STRING). Handle these via:

- Explicit casting in mirroring config (if supported)

- Post-load notebooks that cast columns to desired types

- Custom adapters (for open mirroring)
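The post-load casting option can be sketched in plain Python. In a Fabric notebook you would more likely express the same idea with Spark column casts; the type names, the `CASTS` table, and `cast_row` below are assumptions chosen for illustration and do not reflect Fabric's actual type-mapping rules.

```python
from datetime import datetime
from decimal import Decimal

# Illustrative target types and the Python callables used to reach them.
CASTS = {
    "int": int,
    "decimal": Decimal,
    "timestamp": datetime.fromisoformat,
    "string": str,
}


def cast_row(row: dict, target_types: dict) -> dict:
    """Cast each value to its target type; columns without an entry are kept as strings."""
    out = {}
    for col, val in row.items():
        caster = CASTS[target_types.get(col, "string")]
        out[col] = None if val is None else caster(val)   # nulls pass through unchanged
    return out


raw = {"id": "42", "amount": "19.90", "created_at": "2026-01-15T12:00:00", "note": None}
typed = cast_row(raw, {"id": "int", "amount": "decimal", "created_at": "timestamp"})
```

Keeping the target types in one mapping makes the intended schema explicit and easy to review alongside the source schema documentation.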

Handling Schema Changes

When the source schema changes (new column, dropped column, type change), mirroring behavior depends on configuration:

- Add Column: New column appears in mirrored table with nulls for historical rows

- Drop Column: Column retained in mirrored table (data not deleted) for backward compatibility

- Rename Column: Requires manual intervention; coordinate with governance and consumers

- Type Change: May cause validation errors; route to anomalies table for review
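To decide which of these cases applies, it helps to compare the source schema before and after a change. The sketch below assumes each schema is available as a simple mapping of column name to type name; `diff_schema` is a hypothetical helper, not a Fabric feature.

```python
def diff_schema(old: dict, new: dict) -> dict:
    """Compare two {column_name: type_name} mappings and classify the differences."""
    added = sorted(c for c in new if c not in old)
    dropped = sorted(c for c in old if c not in new)
    type_changed = sorted(c for c in old if c in new and old[c] != new[c])
    return {"added": added, "dropped": dropped, "type_changed": type_changed}


old = {"id": "int", "email": "string", "amount": "int"}
new = {"id": "int", "amount": "decimal(18,2)", "created_at": "timestamp"}
drift = diff_schema(old, new)
```

A renamed column shows up here as one dropped column plus one added column, which is why renames cannot be detected automatically and need manual coordination.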

Design Patterns & Best Practices

Pattern 1: Landing → Transform → Serve

Separate raw mirrored data (landing) from curated business tables (serving). This improves traceability, error handling, and allows safe reruns.

Pattern 2: Idempotent Delta Merge

Use Delta MERGE to ensure downstream inserts/updates are idempotent (safe to replay):
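A minimal sketch of such a merge, written as a Python helper that builds the Delta Lake `MERGE INTO` statement. The table and column names are hypothetical, and the helper only produces SQL text; in a Fabric notebook you would pass the result to `spark.sql(...)`.

```python
def build_merge_sql(target: str, source: str, keys: list, columns: list) -> str:
    """Build a Delta Lake MERGE statement that upserts `source` rows into `target`."""
    non_keys = [c for c in columns if c not in keys]
    if not keys or not non_keys:
        raise ValueError("need at least one key column and one non-key column")
    on_clause = " AND ".join(f"t.{k} = s.{k}" for k in keys)
    set_clause = ", ".join(f"t.{c} = s.{c}" for c in non_keys)
    insert_cols = ", ".join(columns)
    insert_vals = ", ".join(f"s.{c}" for c in columns)
    return (
        f"MERGE INTO {target} AS t\n"
        f"USING {source} AS s\n"
        f"ON {on_clause}\n"
        f"WHEN MATCHED THEN UPDATE SET {set_clause}\n"
        f"WHEN NOT MATCHED THEN INSERT ({insert_cols}) VALUES ({insert_vals})"
    )


# Hypothetical table names: a curated serving table fed from a landing table.
sql = build_merge_sql(
    "serving.orders", "landing.orders_changes",
    keys=["order_id"], columns=["order_id", "status", "amount"],
)
print(sql)
```

Because matched rows are overwritten with the same values and unmatched rows are inserted once, running the statement twice over the same source batch leaves the target unchanged. The source batch should contain at most one row per key, since Delta rejects a merge in which several source rows match the same target row.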

Pattern 3: Late Arriving Facts

Mirrored data may occasionally arrive out of order or late. Handle this with:

- _source_timestamp: Capture source transaction time (not ingestion time)

- _batch_id: Link changes to snapshot or CDC batch for reconciliation

- _confidence_level: Flag rows arriving after a threshold (e.g., > 5 min late)
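The three audit columns above can be attached at load time. The sketch below is one possible shape, assuming the source transaction time and the ingestion time are both known; `tag_row`, the batch identifier format, and the `"late"` / `"on_time"` labels are illustrative choices.

```python
from datetime import datetime, timedelta, timezone

LATE_THRESHOLD = timedelta(minutes=5)   # matches the example threshold above


def tag_row(row: dict, source_ts: datetime, ingested_ts: datetime, batch_id: str) -> dict:
    """Return a copy of `row` with the three audit columns attached."""
    delay = ingested_ts - source_ts
    tagged = dict(row)
    tagged["_source_timestamp"] = source_ts.isoformat()   # source time, not ingestion time
    tagged["_batch_id"] = batch_id
    tagged["_confidence_level"] = "late" if delay > LATE_THRESHOLD else "on_time"
    return tagged


src = datetime(2026, 1, 15, 12, 0, 0, tzinfo=timezone.utc)
on_time = tag_row({"order_id": 1}, src, src + timedelta(seconds=45), "cdc-0001")
late = tag_row({"order_id": 2}, src, src + timedelta(minutes=9), "cdc-0001")
```

Downstream aggregations can then recompute only the periods touched by rows flagged as late instead of reprocessing everything.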

Pattern 4: Anomaly Detection & DLQ

Route data quality issues to a Dead Letter Queue (DLQ) for manual review:
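A minimal sketch of that routing, assuming rows arrive as dictionaries. The validation rules and the `order_id` / `amount` columns are hypothetical; a real pipeline would write the dead-letter entries to a dedicated table.

```python
def validate(row: dict) -> list:
    """Return the reasons a row fails validation (the rules here are illustrative)."""
    reasons = []
    if row.get("order_id") is None:
        reasons.append("missing order_id")
    amount = row.get("amount")
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or amount < 0:
        reasons.append("amount must be a non-negative number")
    return reasons


def route(rows: list) -> tuple:
    """Split rows into (clean, dead_letter); dead-letter entries keep the failure reasons."""
    clean, dead_letter = [], []
    for row in rows:
        reasons = validate(row)
        if reasons:
            dead_letter.append({"row": row, "reasons": reasons})
        else:
            clean.append(row)
    return clean, dead_letter


rows = [
    {"order_id": 1, "amount": 19.99},
    {"order_id": None, "amount": 5},
    {"order_id": 3, "amount": -2},
]
clean, dlq = route(rows)
```

Storing the reasons next to the rejected row lets reviewers fix and replay records without rerunning the validation by hand.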

Best Practice: Freshness SLAs

Define and monitor replication lag SLAs:

- Critical tables: < 1 minute lag (e.g., orders, payments)

- Important tables: < 5 minute lag (e.g., customers, products)

- Reference tables: < 1 hour lag (e.g., regions, categories)
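These tiers translate directly into an automated check. The sketch below assumes you already collect an observed lag per table from your monitoring; `SLA_BY_TIER`, `sla_breaches`, and the table names are illustrative.

```python
from datetime import timedelta

# Lag limits per tier, taken from the list above.
SLA_BY_TIER = {
    "critical": timedelta(minutes=1),
    "important": timedelta(minutes=5),
    "reference": timedelta(hours=1),
}


def sla_breaches(table_tiers: dict, observed_lag: dict) -> list:
    """Return (table, lag, limit) for every table whose lag meets or exceeds its SLA."""
    breaches = []
    for table, tier in table_tiers.items():
        limit = SLA_BY_TIER[tier]
        lag = observed_lag.get(table)
        if lag is None or lag >= limit:   # a missing measurement counts as a breach
            breaches.append((table, lag, limit))
    return breaches


tiers = {"orders": "critical", "customers": "important", "regions": "reference"}
lags = {
    "orders": timedelta(seconds=95),
    "customers": timedelta(minutes=2),
    "regions": timedelta(minutes=20),
}
breaches = sla_breaches(tiers, lags)
```

Here only `orders` is reported: 95 seconds is within the range the monitoring table treats as healthy, but it still breaches the stricter one-minute SLA for critical tables.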

Operational Monitoring & Troubleshooting

Key Metrics to Track

| Metric | Healthy Range | Action if Breached |
|---|---|---|
| Replication Lag | 30–90 seconds | Investigate network latency, CDC backlog, Fabric capacity |
| Snapshot Duration | Depends on table size (1–100 GB = 5–60 min) | If > expected, check source locks and network throughput |
| Failed Transactions | 0 (per hour) | Review error logs; restart connector if transient |
| Row Count Match (source vs sink) | 100% (after CDC catch-up) | Run reconciliation query; identify missing/duplicate rows |
| Capacity CU Usage | < 80% peak during ingestion | Throttle snapshots, schedule during off-peak, scale capacity |

Monitoring Setup (Fabric Monitor Hub)

- Navigate to Workspace Settings → Mirroring → Monitor

- View per-table status, last snapshot date, CDC lag

- Set alerts for replication lag > 5 minutes, failed CDC batches

- Export metrics to Power BI for trending

Reconciliation Query
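One way to reconcile source and sink is to compare primary keys from both sides once CDC has caught up. The sketch below works on key lists you have already fetched (for example with a `SELECT` of the key column on each side); `reconcile` and its report fields are hypothetical names.

```python
from collections import Counter


def reconcile(source_keys: list, sink_keys: list) -> dict:
    """Compare primary keys from source and sink; report gaps and duplicates."""
    sink_counts = Counter(sink_keys)
    source_set = set(source_keys)
    sink_set = set(sink_counts)
    return {
        "source_rows": len(source_keys),
        "sink_rows": len(sink_keys),
        "missing_in_sink": sorted(source_set - sink_set),
        "unexpected_in_sink": sorted(sink_set - source_set),
        "duplicated_in_sink": sorted(k for k, n in sink_counts.items() if n > 1),
    }


report = reconcile([1, 2, 3, 4], [1, 2, 2, 5])
```

In this example both sides hold four rows, yet keys 3 and 4 are missing, key 5 is unexpected, and key 2 is duplicated. A matching row count alone is therefore not sufficient evidence that the mirror is correct.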

Common Issues & Fixes

High replication lag. Fix: Scale Fabric capacity, check for source locks, reduce CDC batch size.

Failed CDC batches or transactions. Fix: Review error logs for transient vs. persistent errors; add retry logic or increase timeout.

Row count mismatch between source and sink. Fix: Check for duplicate key violations, deleted rows not being marked, or filter conditions in mirroring config.

Security, Governance & Lineage

Access Control (Least Privilege)

- Mirroring Setup: Only workspace admins or data engineers with mirroring permissions

- Read Access: Analysts and BI teams get Reader access to curated tables only

- Raw Mirrored Tables: Restrict to data engineering; prevent direct analytics queries

- Credentials: Store secrets in Azure Key Vault and connect with service principals; never hardcode passwords

Data Masking & Sanitization

For PII (Personally Identifiable Information), apply masking in transformation notebooks:
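A minimal sketch of two common techniques, partial masking and keyed hashing, using only the Python standard library. The column names and the secret are placeholders; in practice the secret would be loaded from a vault rather than written in the notebook.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret: bytes) -> str:
    """Keyed SHA-256 hash: stable across runs for joins, not reversible without the secret."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(secret, normalized, hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, sep, domain = email.partition("@")
    if not sep or not local:
        return "***"                      # not a recognizable address, hide it entirely
    return f"{local[0]}***@{domain}"


secret = b"load-this-from-key-vault"      # placeholder; never hardcode a real secret
row = {"customer_id": 7, "email": "Jane.Doe@example.com"}
masked = {
    "customer_id": row["customer_id"],
    "email_masked": mask_email(row["email"]),
    "email_hash": pseudonymize(row["email"], secret),
}
```

The masked value is safe to display, while the keyed hash still lets curated tables be joined on the same customer without exposing the address.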

Lineage & Data Catalog Registration

Register mirrored datasets in the Fabric Data Catalog:

- Business Glossary: Tag columns with business terms (e.g., “Order Date” = order_ts)

- Impact Analysis: Track which dashboards and reports consume mirrored data

- Change Notifications: Alert downstream users when schema changes

- Data Quality Rules: Register freshness, completeness, and accuracy SLAs

Audit Logging

Fabric automatically logs mirroring activities:

- Snapshot start/completion

- CDC streaming status changes

- Failed transactions and errors

- Access to mirrored tables (via query logs)

Practical Recipes & End-to-End Examples

Recipe 1: Real-Time Sales Dashboard

Recipe 2: ML Feature Store from Transactional Data

Recipe 3: Handling Late-Arriving Facts

Recipe 4: Multi-Source Consolidation

Frequently Asked Questions – Data Mirroring in Fabric

Does mirroring support my on-premises database?

Yes, via the Fabric data gateway. Install and register the gateway in your on-premises network. Mirroring will connect through it securely. Confirm your DBMS version is compatible with the gateway’s CDC capabilities (SQL Server 2016+, PostgreSQL 10+, MySQL 5.7+).

What latency should I expect?

Roughly 30–90 seconds end to end for healthy setups. Snapshot duration depends on table size: 1 GB ≈ 1–2 min, 100 GB ≈ 30–60 min. After the snapshot, CDC latency is typically 30–60 sec, depending on network and source transaction volume.

Can I mirror to multiple workspaces?

Not directly. Each mirroring connection targets a single Fabric workspace. Workaround: Set up multiple mirroring connections in different workspaces pointing to the same source, or use OneLake shortcuts to share mirrored data across workspaces.

What happens when the source schema changes?

Mirroring adapts automatically for additions and type-safe changes. For breaking changes (column drop, type incompatibility), manually update the mirroring configuration and restart CDC. Coordinate with downstream consumers (dashboards, notebooks) before applying schema changes.

How do I backfill historical data?

Mirroring always starts with a full snapshot. If you need data before the snapshot, either: (a) restore a backup on source and re-snapshot, or (b) use a separate historical data load tool (e.g., Copy Activity or Dataflow Gen2) to fill in older periods.

Can I filter rows during mirroring?

Native mirroring doesn’t support WHERE clauses. If you need filtering, apply it post-load in a transformation notebook. Alternatively, use Open Mirroring with custom adapters to filter at ingestion.

What’s included in the Fabric price for mirroring?

Mirroring capacity usage is charged as part of your Fabric capacity bill. There’s no separate per-GB ingestion fee. Use the Fabric Pricing Calculator to estimate your costs.

How does mirroring compare to Data Pipelines + Copy Activity?

Mirroring is simpler and more automated (no custom CDC logic). Copy Activity is more flexible for complex transformations. Ideal: Mirroring for raw replication, Copy Activity for selective loads and transformations.

Ready to Enable Data Mirroring?

Start with a low-risk source (< 100 GB), validate replication lag and data quality, then scale to mission-critical systems. Combine mirroring with notebooks and pipelines to build production-grade analytics.